AN ARTIFACT-FREE WAVELET MODEL FOR PERCEPTUAL CONTRAST ENHANCEMENT OF COLOR IMAGES

Edoardo Provenzi and Vicent Caselles

Department of Information Technology, Universitat Pompeu Fabra, C/ Tànger 122-140, Barcelona, Spain

Keywords:

Contrast Perception, Wavelets, Color.

Abstract:

Contrast enhancement of color images can prove to be a difficult task because artifacts and unnatural colors

can appear after the process. In this paper we propose a wavelet-based variational framework in which contrast

enhancement is obtained through the minimization of a suitable energy functional of wavelet coefficients. We

will show that this new approach has certain advantages with respect to the usual spatial techniques sustained

by the fact that the wavelet representation is intrinsically local, multiscale and sparse. The Euler-Lagrange

equations of the model are implicit equations involving the detail wavelet coefficients of the image. These

equations can be quickly solved by Newton’s method, so that the algorithm can rapidly compute the enhanced

detail coefficients. We will discuss the influence of the parameters and present tests on natural images to show that the method is artifact-free within a wide range of parameter values.

1 INTRODUCTION

Digital images can present poor contrast, globally or locally, due to many factors: wrong camera exposure or aperture settings, back-light conditions, high dynamic range of the scene, and so on. Contrast enhancement can help improve detail visibility and, in general, the overall look of the image. When we deal with color images, the issue of contrast enhancement is quite complex because artifacts and unnatural colors can appear after the contrast modification.

Since humans are capable of high-quality color vision, it is quite natural to design algorithms that try to mimic the features of the Human Visual System (HVS) in order to reach an efficient enhancement. The algorithms built in this way are usually called perceptually-inspired and they are used in research fields such as computational photography, image quality, interior design and robotic vision, to cite but a few.

In this paper we analyze the problem of perceptual contrast enhancement with variational techniques from the point of view of wavelet theory. For this purpose we propose a functional of detail coefficients whose minimization induces a local and multiscale improvement of contrast. We will show that the Euler-Lagrange equations of the functional are implicit nonlinear equations which enhance the wavelet detail coefficients of the image. By using Newton’s method those equations can be quickly solved, ensuring a global computational complexity of $O(N)$, $N$ being the total number of image pixels. Moreover, the sparsity of the wavelet representation allows the algorithm to be fast.

For the sake of clarity, it is worthwhile to introduce here the notation that we are going to use throughout the paper. Given a discrete RGB image, we will denote by $I \subset \mathbb{Z}^2$ its spatial domain and by $x \equiv (x_1, x_2)$ and $y \equiv (y_1, y_2)$ the coordinates of two arbitrary pixels in $I$. We will always consider a normalized dynamic range in $[0, 1]$, so that a color image function will be $\vec{I} : I \to [0,1]^3$, $\vec{I}(x) = (I_R(x), I_G(x), I_B(x))$, where $I_k(x)$ is the intensity level of the pixel $x \in I$ in the chromatic channel $k \in \{R, G, B\}$. We stress that every computation will be performed on the scalar components of the image, thus treating each chromatic component independently, as in Retinex-like algorithms (Land and McCann, 1971). Therefore, we will drop the subscript $k$ and simply write $I(x)$ to denote the intensity of the pixel $x$ in a given chromatic channel.

2 A PERCEPTUAL CONTRAST FUNCTIONAL IN THE WAVELET DOMAIN

In this section we shall motivate our choice for the contrast functional to be minimized in order to obtain a perceptually-inspired contrast enhancement in the wavelet domain.

Provenzi E. and Caselles V. AN ARTIFACT-FREE WAVELET MODEL FOR PERCEPTUAL CONTRAST ENHANCEMENT OF COLOR IMAGES. DOI: 10.5220/0003806403170322. In Proceedings of the International Conference on Computer Vision Theory and Applications (VISAPP-2012), pages 317-322. ISBN: 978-989-8565-03-7. Copyright © 2012 SCITEPRESS (Science and Technology Publications, Lda.)

In (Palma-Amestoy et al., 2009), the authors proved that there exists only one type of contrast functional that complies with a set of basic phenomenological HVS properties: color constancy, i.e. the ability to perceive colors as (almost) the same independently of the illumination conditions; locality of contrast enhancement, exhibited by well-known phenomena such as Mach bands or simultaneous contrast; and Weber-Fechner’s law of contrast perception, i.e. the logarithmic response of the HVS to changes of spot light intensity. This functional is the following (see footnote 1):

$$C_w(I) = \sum_{x \in I} \sum_{y \in I} w(x,y)\, \frac{\min\{I(x), I(y)\}}{\max\{I(x), I(y)\}}, \tag{1}$$

where $w : I \times I \to (0, +\infty)$ is a weight function that induces locality. The full details about why this functional complies with the basic HVS features listed above can be found in the quoted paper; here we briefly report why the minimization of $C_w$ induces contrast enhancement and how it is related to color constancy. Regarding contrast enhancement, observe that the function $c(I(x), I(y)) = \frac{\min\{I(x), I(y)\}}{\max\{I(x), I(y)\}}$ is minimized when the minimum intensity value decreases and the maximum increases, which corresponds to a contrast intensification. The relation with color constancy comes from the observation that $c$ is a homogeneous function of degree zero, i.e. $c(\lambda I(x), \lambda I(y)) = c(I(x), I(y))$ for all $\lambda \neq 0$; in image formation models $\lambda$ is interpreted as the ‘color temperature’ of the light source that illuminates a scene, thus the homogeneity property implies that the functional $C_w$ is able to automatically discard the color cast induced by a global illuminant, coherently with the HVS property of color constancy.
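To make the two properties of $c$ concrete, the following minimal Python sketch evaluates the functional of eq. (1) on a tiny image. The Gaussian weight and its width `sigma`, the function names and the 2×2 test images are assumptions made for illustration; the text only requires $w > 0$. The scale factor is taken positive, which is the physically relevant case for an illuminant.

```python
import math

def c(a, b):
    """Basic contrast variable: ratio min/max of two positive intensities."""
    return min(a, b) / max(a, b)

def contrast_functional(img, sigma=1.0):
    """C_w(I) = sum_x sum_y w(x, y) * c(I(x), I(y)) on a small 2-D image.

    The Gaussian weight is an assumption; eq. (1) only asks for w > 0.
    """
    pixels = [(i, j, v) for i, row in enumerate(img) for j, v in enumerate(row)]
    total = 0.0
    for i1, j1, v1 in pixels:
        for i2, j2, v2 in pixels:
            dist2 = (i1 - i2) ** 2 + (j1 - j2) ** 2
            w = math.exp(-dist2 / (2.0 * sigma ** 2))
            total += w * c(v1, v2)
    return total

flat = [[0.5, 0.5], [0.5, 0.5]]                          # no contrast
contrasted = [[0.2, 0.8], [0.8, 0.2]]                    # strong local contrast
dimmed = [[0.5 * v for v in row] for row in contrasted]  # same scene, weaker light

C_flat = contrast_functional(flat)
C_contrasted = contrast_functional(contrasted)
C_dimmed = contrast_functional(dimmed)
```

Under these assumptions the high-contrast image yields a smaller value of the functional than the flat one, and rescaling all intensities by the same positive factor leaves the value unchanged up to rounding. The double loop over pixels also shows why a direct evaluation in the spatial domain is costly.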

Footnote 1: In the quoted paper the definition of $C_w$ allows an increasing diffeomorphism $\varphi$ to act on the fraction inside the integral, and the case $\varphi \equiv \mathrm{id}$, id being the identity map used here, is studied as a subcase. Since $\varphi$ will not have any prominent role in the present paper, we have omitted it from the beginning to simplify the notation as much as possible.

In this framework the function $c$ plays the role of basic perceptual contrast variable, a concept that was also of interest to E. Peli in (Peli, 1990). Peli generalized the pioneering work (Hess et al., 1983) and studied perceptual contrast through a multiscale approach in which, at each given scale $j$, he defined the perceptual contrast of $x$ with respect to a neighborhood $U(x)$ as a ratio, precisely

$$c^{\mathrm{Peli}}_{j,U(x)} = \frac{g_j * I(x)}{h_j * I(x)}, \tag{2}$$

where g and h are a band-pass and a low-pass filter,

respectively, of a filter bank with support in U(x) and

∗ denotes the convolution as usual. Peli’s ideas were

then embedded in the wavelet framework and used by

(Bradley, 1999) to build a wavelet-based visible dif-

ference predictor and by (Vandergheynst et al., 2000)

to implement digital watermarking. The details on the

considerations that led Peli to this definition can be

found in the previously quoted paper.
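As a rough illustration of eq. (2), the sketch below computes a band-pass over low-pass ratio on a 1-D signal. The box filters, the edge replication and the names `box_filter` and `peli_contrast` are assumptions chosen for brevity; they are not the filter bank used by Peli, and the band-pass response is simply built as the difference of two low-pass responses.

```python
def box_filter(signal, radius):
    """Moving average with edge replication (a simple low-pass filter)."""
    n = len(signal)
    out = []
    for i in range(n):
        window = [signal[min(max(i + k, 0), n - 1)]
                  for k in range(-radius, radius + 1)]
        out.append(sum(window) / len(window))
    return out

def peli_contrast(signal, radius):
    """Band-pass response divided by low-pass response, sample by sample."""
    fine = box_filter(signal, radius)
    coarse = box_filter(signal, 2 * radius)
    band = [f - c for f, c in zip(fine, coarse)]   # difference of two low-passes
    return [b / c for b, c in zip(band, coarse)]   # assumes a positive signal

signal = [0.2, 0.2, 0.2, 0.2, 0.8, 0.8, 0.8, 0.8]
contrast = peli_contrast(signal, radius=1)
contrast_scaled = peli_contrast([0.5 * s for s in signal], radius=1)
```

With these choices the ratio vanishes in flat regions, is nonzero next to the step, and is unaffected by a global rescaling of the signal, which mirrors the degree-zero homogeneity discussed for $c$.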

We are now going to show that the similarity be-

tween the two approaches to perceptual contrast just

described becomes even stronger if we recast the vari-

ational framework of (Palma-Amestoy et al., 2009)

into the wavelet domain.

For this purpose, let us start by recalling that, following the classical reference book (Mallat, 2008), an orthogonal wavelet multi-resolution analysis of an image between two scales $2^L$ and $2^J$, $L, J \in \mathbb{Z}$, $L < J$, is given by three sets of detail coefficients $\{d^H_{j,k}, d^V_{j,k}, d^D_{j,k}\}_{k \in I,\, j = L, \ldots, J}$, which correspond to the horizontal, vertical and diagonal detail coefficients, respectively, completed by $\{a_{J,k}\}_{k \in I}$, the approximation coefficients at the coarsest scale. If the image is in color, then each chromatic channel has its own set of detail and approximation coefficients. The set $\{a_{J,k}\}_{k \in I}$ gives a coarse description of the image at the scale $2^J$ and it is obtained by convolution between the image and a low-pass filter. The set $\{d_{j,k}\}_{k \in I}$ is obtained by convolution between the image and a spatially localized band-pass filter, so that the $\{d_{j,k}\}_{k \in I}$ give a measure of local contrast in the image at the scale $2^j$.
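The decomposition just recalled can be illustrated with one level of the 2-D Haar transform, the simplest orthogonal wavelet. This is only a sketch: the function name `haar2d_level` is hypothetical, the image must have even dimensions, and the labelling of the horizontal and vertical bands follows one common convention (low-pass along rows, high-pass along columns for the horizontal band), which is an assumption here.

```python
def haar2d_level(img):
    """One level of the orthonormal 2-D Haar transform on an even-sized image.

    Returns (a, dH, dV, dD): approximation, horizontal, vertical and diagonal
    detail coefficients, each with half the rows and columns of the input.
    """
    a, dH, dV, dD = [], [], [], []
    for i in range(0, len(img), 2):
        ra, rh, rv, rd = [], [], [], []
        for j in range(0, len(img[0]), 2):
            p, q = img[i][j], img[i][j + 1]
            r, s = img[i + 1][j], img[i + 1][j + 1]
            ra.append((p + q + r + s) / 2.0)   # low-pass in both directions
            rh.append((p + q - r - s) / 2.0)   # top minus bottom: horizontal edges
            rv.append((p - q + r - s) / 2.0)   # left minus right: vertical edges
            rd.append((p - q - r + s) / 2.0)   # diagonal structure
        a.append(ra)
        dH.append(rh)
        dV.append(rv)
        dD.append(rd)
    return a, dH, dV, dD

# A flat 2x2 block next to a block containing a vertical edge.
img = [[0.5, 0.5, 0.2, 0.8],
       [0.5, 0.5, 0.2, 0.8]]
a, dH, dV, dD = haar2d_level(img)
```

In this toy image the flat block produces zero detail coefficients, while the block with the vertical edge produces a nonzero vertical detail, which is the sense in which the details measure local contrast.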

Our proposal for a perceptual contrast functional in the wavelet domain is

$$C_{p_j,\{a_{j,k}\}}(\{d_{j,k}\}) = \sum_{k \in I} p_j\, \frac{a_{j,k}}{d_{j,k}}, \tag{3}$$

where $p_j$ are positive coefficients that allow us to modulate the strength of contrast enhancement. This definition makes sense only if the detail coefficients are different from zero; for this reason we fix a threshold $T_j > 0$ for each scale and consider only those $d_{j,k}$ satisfying $|d_{j,k}| > T_j$; the other coefficients will be left unchanged.

Thanks to the locality of the wavelet representation, the functional $C_{p_j,\{a_{j,k}\}}$ is intrinsically local and does not need the introduction of any further weighting function, which is instead essential in the spatial variational framework to localize the computation.

If we keep the approximation coefficients fixed and let the detail coefficients free to vary, then the minimization of $\frac{a_{j,k}}{d_{j,k}}$ corresponds to the intensification of the detail coefficients and thus of local contrast. Observe also that here the basic contrast variable is $\frac{a_{j,k}}{d_{j,k}}$, which is still a homogeneous function of degree 0 as in the variational framework recalled above and which, at the same time, is in line with the multiscale contrast interpretation of Peli, since the coefficients $a$ and $d$ come from low-pass and band-pass filters, respectively.

We cannot determine the enhanced detail coefficients solely by minimizing the functional $C_{p_j,\{a_{j,k}\}}$ because that could lead to an uncontrollable over-enhancement of contrast; thus we have to introduce a dispersion control term, $D_{d^0_{j,k}}$, that balances the effect of $C_{p_j,\{a_{j,k}\}}$ with a conservative action that tends to keep the detail coefficients close to their original values $\{d^0_{j,k}\}$. In (Palma-Amestoy et al., 2009) it has been proven that a suitable choice for the dispersion term to preserve dimensional coherence when the contrast is a homogeneous functional of degree 0 is the entropic dispersion, which in the present problem can be written as:

$$D_{d^0_{j,k}}(\{d_{j,k}\}) = \sum_{k \in I} \left[ d^0_{j,k} \log\frac{d^0_{j,k}}{d_{j,k}} - \left( d^0_{j,k} - d_{j,k} \right) \right]. \tag{4}$$

We then define the wavelet-based perceptually-inspired contrast-enhancement energy as the sum of the two previous functionals, i.e.

$$E_{p_j,\{a_{j,k}\},d^0_{j,k}}(\{d_{j,k}\}) = C_{p_j,\{a_{j,k}\}}(\{d_{j,k}\}) + D_{d^0_{j,k}}(\{d_{j,k}\}). \tag{5}$$
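The interplay of the two terms in eq. (5) can be checked numerically. The sketch below evaluates the energy at one scale for a few hypothetical positive coefficients; all numerical values and function names are assumptions for illustration, and the coefficients are taken positive so that the logarithm in eq. (4) is defined.

```python
import math

def contrast_term(d, a, p):
    """p * a / d for one coefficient, as in eq. (3)."""
    return p * a / d

def dispersion_term(d, d0):
    """Entropic dispersion d0*log(d0/d) - (d0 - d) for one coefficient, eq. (4)."""
    return d0 * math.log(d0 / d) - (d0 - d)

def energy(d, d0, a, p):
    """Energy of eq. (5), summed over the coefficients of one scale."""
    return sum(contrast_term(dk, ak, p) + dispersion_term(dk, d0k)
               for dk, d0k, ak in zip(d, d0, a))

d0 = [0.10, 0.25, 0.40]   # hypothetical original detail coefficients (positive)
a = [0.50, 0.60, 0.70]    # hypothetical approximation coefficients
p = 0.5

E_original = energy(d0, d0, a, p)
E_larger = energy([1.5 * v for v in d0], d0, a, p)    # details intensified
E_smaller = energy([0.5 * v for v in d0], d0, a, p)   # details attenuated
```

For these values, moderately larger details lower the energy and smaller details raise it, while the dispersion term is zero at the original coefficients and positive away from them; this is the balance between enhancement and conservation described above.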

By setting to zero the first variation of this energy

we find its Euler-Lagrange equations, as we show in

the following proposition. Its proof is postponed to

the Appendix for the sake of a better readability of

the paper.

Proposition 2.1. The minimization of $E_{p_j,\{a_{j,k}\},d^0_{j,k}}$ gives rise to the following Euler-Lagrange equations for the detail coefficients:

$$\frac{\partial E_{p_j,\{a_{j,k}\},d^0_{j,k}}}{\partial \{d_{j,k}\}} = 0 \implies d_{j,k} = d^0_{j,k} + p_j\, \frac{a_{j,k}}{d_{j,k}}. \tag{6}$$
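As a cross-check on eq. (6), which is not part of the original derivation, one may note that multiplying the implicit equation by $d_{j,k}$ turns it into a quadratic. The worked step below assumes $p_j\, a_{j,k} > 0$ and $d^0_{j,k} \neq 0$, and selects the root on the same side of zero as $d^0_{j,k}$.

```latex
\begin{align*}
d_{j,k} = d^0_{j,k} + p_j\,\frac{a_{j,k}}{d_{j,k}}
  \;&\Longleftrightarrow\;
  d_{j,k}^{\,2} - d^0_{j,k}\, d_{j,k} - p_j\, a_{j,k} = 0, \\
d_{j,k} \;&=\; \frac{1}{2}\left( d^0_{j,k}
  + \operatorname{sgn}\!\big(d^0_{j,k}\big)
  \sqrt{\big(d^0_{j,k}\big)^2 + 4\, p_j\, a_{j,k}} \right).
\end{align*}
```

Since the square root exceeds $|d^0_{j,k}|$ under the stated assumption, this root satisfies $|d_{j,k}| > |d^0_{j,k}|$, i.e. the detail coefficient is intensified. The expression can serve as a reference value when testing an iterative solver.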

We can now summarize the steps of the variational wavelet-based algorithm for perceptual contrast enhancement as follows: 1) Consider the three chromatic components of an image independently (see footnote 2) and use the discrete wavelet transform to obtain a multiresolution analysis of each component over a certain number of scales; 2) Compute, for each scale, the new detail coefficients (horizontal, vertical and diagonal) as prescribed by eq. (6) and substitute the original ones with these new ones; 3) Apply the inverse wavelet transform to obtain the filtered image. In addition to these steps, we apply a linear stretching to the coarsest approximation coefficients $a_{J,k}$ in order to maximize the dynamic range reproduced.

Footnote 2: Processing color images by performing operations separately on the three chromatic channels is common to all Retinex-like algorithms.
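The three steps can be sketched for a single channel on a 1-D signal with the Haar wavelet. This is a toy illustration under several assumptions, not the authors' MATLAB implementation: the signal length must be divisible by $2^{\text{levels}}$, the approximation coefficients are assumed positive, the threshold uses $\max_k |d_{j,k}| / K$, the value `p=0.01` is an illustrative choice for this toy signal rather than the paper's setting, the final linear stretching is omitted, and eq. (6) is solved through the root of the equivalent quadratic $d^2 - d^0 d - p\,a = 0$ instead of an iterative method.

```python
import math

SQRT2 = math.sqrt(2.0)

def haar_forward(signal, levels):
    """Multi-level orthonormal 1-D Haar analysis.

    Returns the coarsest approximation and a list of (approx, detail) pairs,
    one per level, ordered from fine to coarse.
    """
    approx = list(signal)
    pyramid = []
    for _ in range(levels):
        a = [(approx[i] + approx[i + 1]) / SQRT2 for i in range(0, len(approx), 2)]
        d = [(approx[i] - approx[i + 1]) / SQRT2 for i in range(0, len(approx), 2)]
        pyramid.append((a, d))
        approx = a
    return approx, pyramid

def haar_inverse(coarsest, details):
    """Rebuild the signal from the coarsest approximation and fine-to-coarse details."""
    approx = list(coarsest)
    for d in reversed(details):
        rebuilt = []
        for ak, dk in zip(approx, d):
            rebuilt.append((ak + dk) / SQRT2)
            rebuilt.append((ak - dk) / SQRT2)
        approx = rebuilt
    return approx

def enhance(d0, a, p):
    """Root of d = d0 + p*a/d with the sign of d0 (assumes p*a > 0)."""
    return 0.5 * (d0 + math.copysign(math.sqrt(d0 * d0 + 4.0 * p * a), d0))

def enhance_channel(signal, levels=2, p=0.01, K=10.0):
    """Steps 1-3 for one chromatic channel, without the final stretching."""
    coarsest, pyramid = haar_forward(signal, levels)
    new_details = []
    for a, d in pyramid:
        T = max(abs(v) for v in d) / K               # per-scale threshold
        new_details.append([enhance(dk, ak, p) if abs(dk) > T else dk
                            for ak, dk in zip(a, d)])
    return haar_inverse(coarsest, new_details)

channel = [0.30, 0.30, 0.32, 0.40, 0.42, 0.42, 0.30, 0.30]
enhanced = enhance_channel(channel)
```

Because only detail coefficients are modified, the sum of the samples is preserved while local differences grow. The output is not clipped to $[0, 1]$ in this sketch; a color image would be processed by calling `enhance_channel` on each of the three channels separately.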

The wavelet algorithm previously described has

computational complexity O (N), N being the number

of image pixels, and we implemented it in MATLAB

using the ‘wavelet toolbox’.

Besides the direct and inverse wavelet transforms, the operation that requires the most time is the iterative computation of the enhanced detail coefficients, i.e. the solution of the implicit equation (6). An efficient way to do that is to use Newton’s method (Fausett, 2007), initialized with the original values $d^0_{j,k}$. Our algorithm stops when the relative error between two subsequent iterations is smaller than $10^{-3}$, and typically convergence is reached in just two, or at most three, iterations. Thanks to the quadratic convergence of Newton’s algorithm and to the low computational cost of the discrete wavelet transform, the wavelet algorithm is considerably faster than the spatial variational algorithm of (Palma-Amestoy et al., 2009). To give an idea of the speed-up, we report that it took only 4.98 seconds with the MATLAB code to filter a quite large image of dimension 922×691 over five scales, while it took 391.35 seconds to filter it with the C++ implementation of the algorithm presented in (Palma-Amestoy et al., 2009) on the same computer. We also stress that MATLAB is an interpreted language, so that an optimized code for graphics cards could further speed up the wavelet algorithm in order to reach real-time performance.
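A minimal sketch of the Newton iteration for a single coefficient is given below. It applies Newton's method to $f(d) = d - d^0 - p\,a/d$, starts from $d^0$ and uses the relative stopping rule with tolerance $10^{-3}$ described in the text; the function name, the iteration cap `max_iter` and the numerical values are assumptions. The result is compared with the root of the equivalent quadratic $d^2 - d^0 d - p\,a = 0$.

```python
import math

def newton_enhance(d0, a, p, tol=1e-3, max_iter=20):
    """Solve d = d0 + p*a/d by Newton's method, starting from d0 (d0 != 0).

    Returns (d, iterations). Stops when the relative change between two
    successive iterates drops below tol, or after max_iter iterations.
    """
    d = d0
    for it in range(1, max_iter + 1):
        f = d - d0 - p * a / d
        fprime = 1.0 + p * a / (d * d)
        d_new = d - f / fprime
        if abs(d_new - d) <= tol * abs(d_new):
            return d_new, it
        d = d_new
    return d, max_iter

d0, a, p = 0.4, 0.7, 0.5                     # hypothetical coefficient values
d_newton, iterations = newton_enhance(d0, a, p)
d_exact = 0.5 * (d0 + math.sqrt(d0 * d0 + 4.0 * p * a))
d_newton_neg, _ = newton_enhance(-d0, a, p)  # negative coefficient, same magnitude
```

The thresholding $|d^0_{j,k}| > T_j > 0$ keeps the starting point away from zero, where the iteration is undefined. How many iterations are needed depends on the size of $p\,a$ relative to $(d^0)^2$, so the count observed on a toy value need not match the figures reported for natural images.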

In the next section we shall discuss the effect of

parameters on the wavelet algorithm and its perfor-

mances on natural images.

3 TESTS

As it was presented above, the wavelet algorithm has

4 different types of parameters: 1) the threshold T

j

beyond which the wavelet coefficients are considered

significantly above 0 at the scale 2

j

; 2) the number

of scales over which the computation is performed;

3) the coefficients p

j

, 2

L

≤ 2

j

≤ 2

J

, that express how

much we permit to change the original wavelet de-

tail coefficients in each scale; 4) the mother wavelet

ψ. In the next subsections we shall discuss how the

algorithm performances are influenced by these pa-

rameters, but before that we would like to show the

AN ARTIFACT-FREE WAVELET MODEL FOR PERCEPTUAL CONTRAST ENHANCEMENT OF COLOR IMAGES

319

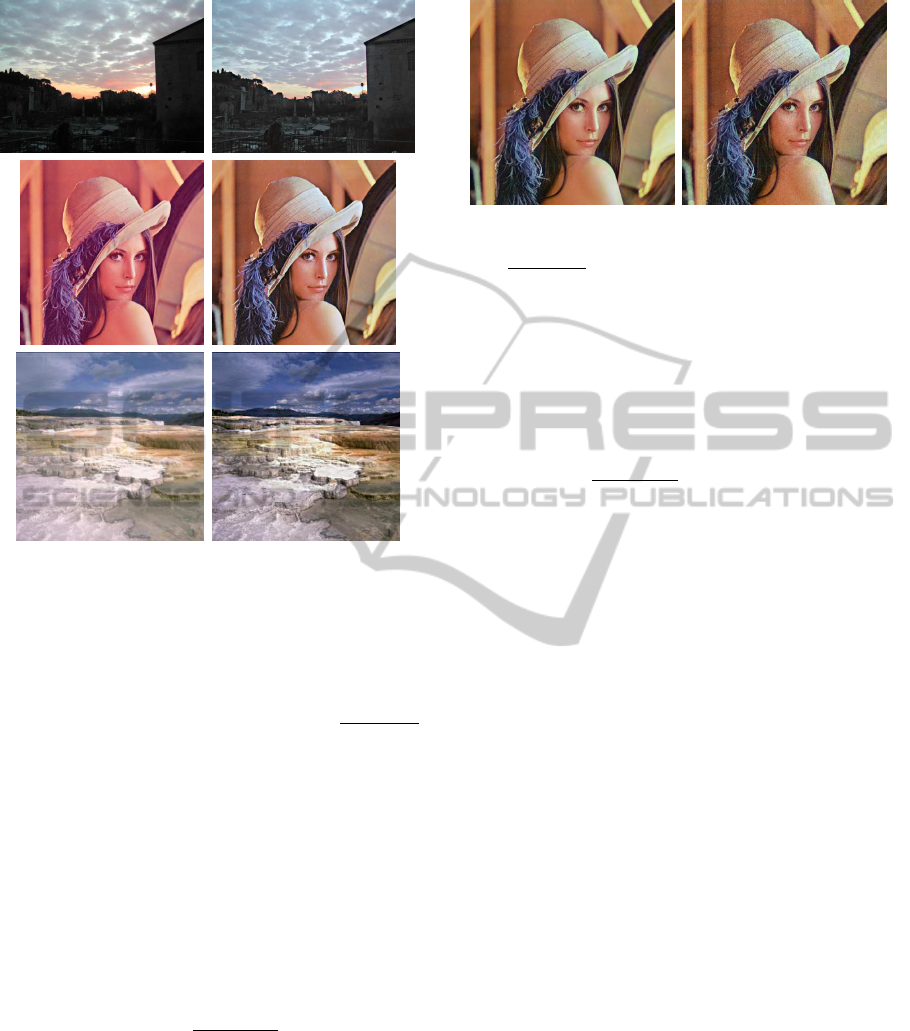

Figure 1: Images on the left: Original ones. Images on

the right: enhanced versions after the wavelet algorithm:

details appear in originally underexposed and overexposed

areas, and the pink color cast in the ‘Lena’ image is re-

moved. The filtering parameters are the following: the

mother wavelet is the Daubechies wavelet with two vanish-

ing moments, the computation is performed over the maxi-

mum number of scales allowed for each image (see Subsec-

tion 3.2 for more details), p

j

= 0.5, and T

j

=

max

k∈I

{d

j,k

}

10

for each scale 2

j

.

efficiency of the wavelet algorithm on three images

affected by distinct problems: under-exposure, color

cast and over-exposure; as can be seen in Figure 1 the

wavelet algorithm is able to perform a radiometric ad-

justment of the non-optimally exposed pictures and to

strongly reduce the color cast.

3.1 The Threshold Parameter $T_j$

In the computational algorithm we have set the threshold parameter to be $T_j \equiv \frac{\max_{k \in I}\{d_{j,k}\}}{K}$, $K > 1$, for all the scales $2^j$. Of course, selecting $K \simeq 1$ we deal only with the largest detail coefficients, while if we set $K \gg 1$ we also include the smaller ones in the computation. Our tests have shown that an optimal value for $K$ is 10 for every scale; in fact, with values of $K$ larger than 10 the algorithm does not introduce significant improvement in detail rendition, but it may have the unwanted effect of intensifying the noise corresponding to small detail coefficients. Thus, we have set once and for all $T_j = \frac{\max_{k \in I}\{d_{j,k}\}}{10}$ for all the scales $2^j$, which means that we only deal with the detail coefficients that lie within one decimal order of magnitude of the biggest ones. In Figure 2 we show the effect of decreasing the threshold $T_j$ too much.

Figure 2: From left to right: ‘Lena’ image filtered with the wavelet algorithm with decreasing values of the threshold $T_j = \frac{\max_{k \in I}\{d_{j,k}\}}{K}$ corresponding to $K = 10$ and $50$, respectively. We can see that when $K = 50$ the resulting image is affected by noise due to the intensification of small detail coefficients corresponding to noise. The other parameters are kept fixed: the computation is performed over the maximum number of scales allowed for each image (see Subsection 3.2 for more details), $p_j = 0.5$ for each scale, and the mother wavelet is ‘Sym8’, the Symlet with a support width of 15 pixels (arbitrarily chosen).
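The threshold rule of this subsection can be sketched as follows. The coefficient values and the function name are hypothetical, and taking the maximum of the absolute values is an assumption, since the text writes the threshold simply as $\max_{k}\{d_{j,k}\}/K$.

```python
def significant_mask(details, K=10.0):
    """Return T = max|d| / K and a flag per coefficient telling whether |d| > T."""
    T = max(abs(d) for d in details) / K
    return T, [abs(d) > T for d in details]

# Hypothetical detail coefficients at one scale; the last entries mimic noise.
details = [0.80, -0.50, 0.09, -0.02, 0.30, 0.01]

T10, mask10 = significant_mask(details, K=10.0)
T50, mask50 = significant_mask(details, K=50.0)
```

With these values, raising $K$ from 10 to 50 lowers the threshold and lets one more small coefficient into the enhancement step, which is the mechanism by which a large $K$ can end up amplifying noise.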

3.2 The Number of Scales

The number of scales $J - L$ that can be used depends on the image dimension and on the width $W_\psi$ of the mother wavelet support. In fact, the maximum number of meaningful scales is the highest value of $J - L$ such that the following inequality holds: $2^{J-L}\, W_\psi \le \min\{\mathrm{width}(I), \mathrm{height}(I)\}$. This value can be automatically computed with the command ‘wmaxlev’ in the MATLAB wavelet toolbox. Our tests have shown that the best contrast enhancement performance of the wavelet algorithm, in terms of detail rendition and elimination of color cast, corresponds to the highest number of scales allowed. For this reason we have used the command ‘wmaxlev’ to automatically set the number of scales over which to carry out the computation of the enhanced detail coefficients, thus eliminating the variability of this parameter.
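The inequality above can be evaluated directly, as in the sketch below. The function name is hypothetical and the code implements only the inequality as stated in the text; it is not a reimplementation of the MATLAB command ‘wmaxlev’, whose internal rule may differ.

```python
def max_scales(width, height, support_width):
    """Largest n >= 0 such that 2**n * support_width <= min(width, height).

    Returns 0 when even a single further scale would not fit.
    """
    shortest = min(width, height)
    n = 0
    while (2 ** (n + 1)) * support_width <= shortest:
        n += 1
    return n

levels = max_scales(922, 691, 15)
```

For a 922×691 image and a support width of 15 pixels (the value quoted for ‘Sym8’ in the caption of Figure 2) the inequality allows five scales. This agrees with the five scales mentioned for the timing test on an image of that size, although the text does not state which wavelet was used there.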

3.3 The Contrast Enhancement Coefficients $p_j$

From eq. (6) it follows that, if we increase the value of the coefficients $p_j$, the effect of contrast enhancement becomes more intense. However, if we increase them too much, contrast can be over-enhanced, resulting in unpleasant images with unnaturally high contrast. This effect is shown in Figure 3, where we show the difference produced by increasing the coefficients $p_j$ by one order of magnitude.

Figure 3: From left to right: effect of increasing the coefficients $p_j$ from 0.5 to 5, respectively. The images filtered with $p_j = 10$ have unnaturally high contrast. In all the computations the other parameters are kept fixed: the computation is performed over the maximum number of scales allowed, $T_j = \frac{\max_{k \in I}\{d_{j,k}\}}{10}$ for each scale and the mother wavelet is the ‘Sym8’ (arbitrarily chosen).

In general, setting $p_j = 0.5$ gives overall good performance of the wavelet algorithm, thus 0.5 can be considered as a ‘reference value’ for the coefficients $p_j$. However, since their setting is very intuitive, they can also be easily tuned around this reference value by a user who may want more or less contrast enhancement.
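The monotone effect of $p_j$ can be made explicit on a single coefficient. The sketch below uses the fact that eq. (6) multiplied by $d_{j,k}$ is the quadratic $d^2 - d^0 d - p\,a = 0$, and evaluates the root with the sign of $d^0$; the numerical values and the function name are assumptions for illustration, and $p\,a > 0$ is assumed.

```python
import math

def enhanced_coefficient(d0, a, p):
    """Root of d = d0 + p*a/d with the sign of d0 (assumes p*a > 0)."""
    return 0.5 * (d0 + math.copysign(math.sqrt(d0 * d0 + 4.0 * p * a), d0))

d0, a = 0.2, 0.5   # hypothetical detail and approximation coefficients

# Amplification factor d / d0 for three values of p spanning two decades.
gains = {p: enhanced_coefficient(d0, a, p) / d0 for p in (0.05, 0.5, 5.0)}
```

For these values the amplification factor grows with $p$ and always exceeds 1, which is consistent with the qualitative behaviour described in this subsection.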

3.4 The Mother Wavelet ψ

Different mother wavelets $\psi$ have, in general, different support and symmetry properties (see footnote 3). As a consequence, different mother wavelets induce different local contrast enhancement, as can be seen in Figure 4. How to properly choose the family of wavelets is still an open problem that we would like to address in the future.

Let us suppose that a give wavelet class is chosen,

then one has a further degree of freedom given by the

number of vanishing moments. These ones have a

strong relation with local contrast: it can be proved

(see (Mallat, 2008)) that the bigger is the number of

vanishing moments of ψ, the higher must be the im-

age contrast detected in the support of ψ to get de-

tail coefficients appreciably different from zero. So,

the rationale for choosing the number of moments of

a mother wavelet within the wavelet-based algorithm

discussed in this paper is the following: if a user is

interested in highlighting only high contrast regions,

then a wavelet with a high number of vanishing mo-

3

For an extensive discussion about mother wavelet prop-

erties, the interested reader is referred to chapter 7 of the

standard book (Daubechies, 1992). Moreover, MATLAB

provides information about symmetry, size of the support

and number of vanishing moments of every wavelet family

with the command ‘waveinfo’.

Figure 4: From left to right: output of the wavelet algorithm

obtained with the Daubechies and Coiflet wavelet, respec-

tively, with 4 vanishing moments. The other parameters are

maintained fixed: the computation is performed over the

maximum number of scales allowed, T

j

=

max

k∈I

{d

j,k

}

10

and

p

j

= 0.5 for each scale.

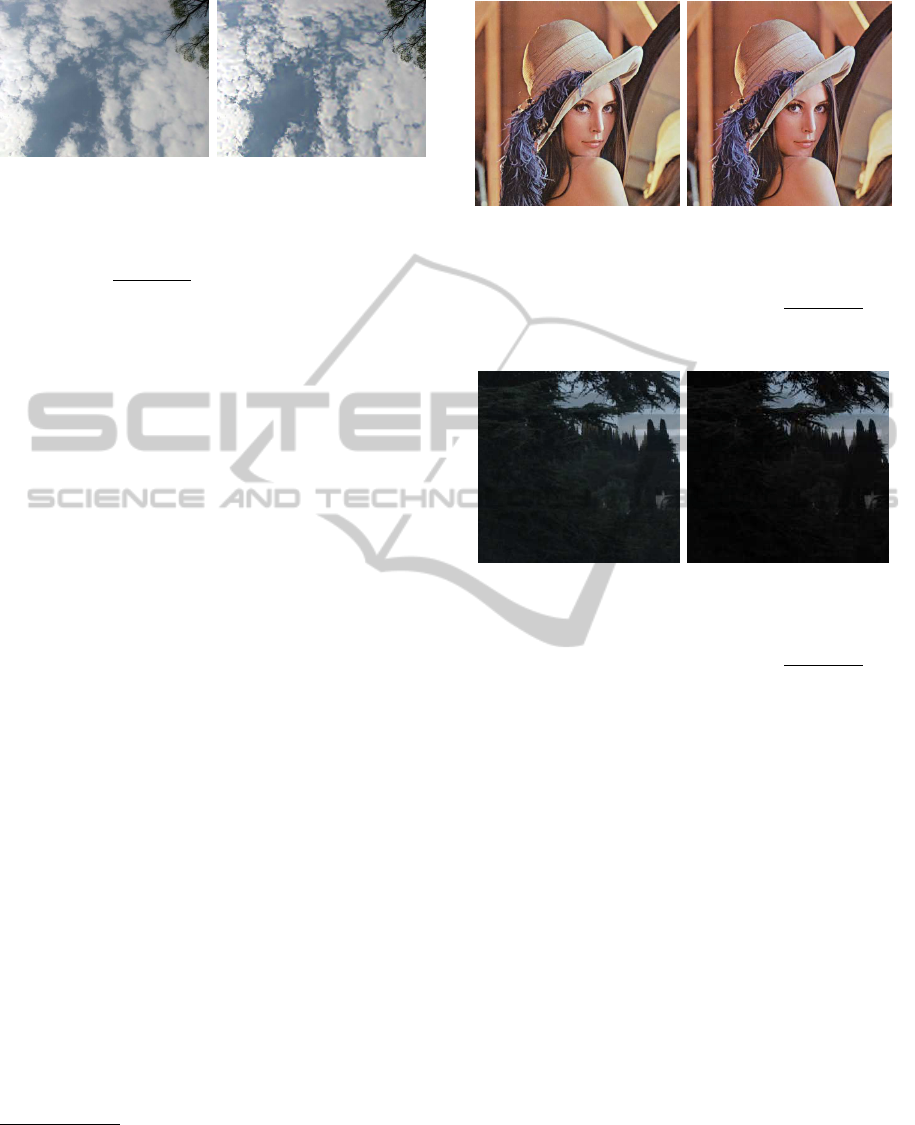

Figure 5: From left to right: output of the wavelet algo-

rithm obtained with the Daubechies wavelet with 3 and 8

vanishing moments, respectively. The other parameters are

maintained fixed: the computation is performed over the

maximum number of scales allowed, T

j

=

max

k∈I

{d

j,k

}

10

and

p

j

= 0.5 for each scale.

ments should be selected; viceversa, if one is also in-

terested in enhancing lower contrast regions, then a

smaller number of vanishing moments must be pre-

ferred. This fact is best shown in dark image zones,

as in Figure 5, where we show the effect of changing

the number of vanishing moments of the Daubechies

wavelet from 3 to 8. Coherently with what stated

above, it can be seen that the contrast enhancement

on low contrast areas provided by a wavelet with a

smaller number of vanishing moments is better since

a greater number of detail coefficients appreciably

greater than zero can be enhanced.
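The role of vanishing moments can be illustrated by comparing the Haar wavelet (one vanishing moment) with the Daubechies wavelet with two vanishing moments on a smooth ramp and on a sharp step. The sketch is an assumption-laden toy: it applies the high-pass filters to every full window of a 1-D signal without downsampling, and the sign convention used to derive the high-pass filter from the low-pass one is one of several in use.

```python
import math

S2, S3 = math.sqrt(2.0), math.sqrt(3.0)

# High-pass (wavelet) filters: Haar has 1 vanishing moment, Daubechies-2 has 2.
HAAR_G = [1.0 / S2, -1.0 / S2]
DB2_H = [(1 + S3) / (4 * S2), (3 + S3) / (4 * S2),
         (3 - S3) / (4 * S2), (1 - S3) / (4 * S2)]       # low-pass taps
DB2_G = [((-1) ** k) * DB2_H[3 - k] for k in range(4)]   # alternating flip

def detail_response(signal, g):
    """Inner product of the filter with every full window of the signal."""
    n = len(g)
    return [sum(g[k] * signal[i + k] for k in range(n))
            for i in range(len(signal) - n + 1)]

ramp = [0.1 * i for i in range(8)]   # smooth, low-contrast variation
step = [0.2] * 4 + [0.8] * 4         # sharp, high-contrast edge

haar_on_ramp = detail_response(ramp, HAAR_G)
db2_on_ramp = detail_response(ramp, DB2_G)
db2_on_step = detail_response(step, DB2_G)
```

On the linear ramp the Haar responses are nonzero while the two-moment filter returns values that vanish up to rounding; on the step both filters respond. A wavelet with more vanishing moments therefore leaves fewer coefficients above the threshold in smoothly varying, low-contrast regions, in line with the rationale given above.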

4 CONCLUSIONS AND PERSPECTIVES

We have proposed a variational model of perceptually-inspired contrast enhancement of color images based on the wavelet representation. The wavelet framework underlines the similarities between the interpretation of perceptual contrast given by (Palma-Amestoy et al., 2009) and by (Peli, 1990). The new definition of perceptual contrast in the wavelet domain proposed here makes it possible to construct a fast algorithm that can be used to intensify contrast in color images without introducing artifacts or unnatural colors.

The wavelet algorithm is intrinsically local and has computational complexity $O(N)$, $N$ being the number of image pixels, and it can be parallelized in order to achieve real-time performance even for large images; thus it could also be used to efficiently process video sequences (e.g. to reduce flickering or remove color cast due to film ageing). This improvement with respect to the variational algorithm presented in (Palma-Amestoy et al., 2009) is provided by the sparsity of the wavelet representation and by the quadratic convergence of the Newton algorithm, which is used to solve the implicit equations that give the enhanced detail coefficients.

Qualitative and quantitative tests of the wavelet-based algorithm show that it is able to enhance both under- and over-exposed images and to remove color cast, like the spatial variational method of (Palma-Amestoy et al., 2009).

The wavelet framework raises new issues whose discussion is beyond the scope of this paper, but that we consider interesting for future investigation: 1) What is the relation between the intrinsic features of the mother wavelet $\psi$, i.e. shape, support width and symmetry, and the color normalization abilities of the wavelet algorithm? 2) Can we devise an analogous model by suitably applying the windowed Fourier transform to the spatial variational algorithm presented in (Palma-Amestoy et al., 2009)? If so, how does that model relate to the one described in this paper? 3) What is the optimal selection of the coefficients $p_j$ for contrast enhancement? 4) Can neuroscience models of vision provide insights to properly choose the mother wavelet $\psi$ and the coefficients $p_j$ or to guide towards a more complete model?

ACKNOWLEDGEMENTS

The authors acknowledge partial support by PNPGC project, reference MTM2006-14836, and by GRC reference 2009 SGR 773 funded by the Generalitat de Catalunya. E. Provenzi acknowledges the Ramón y Cajal fellowship by Ministerio de Ciencia y Tecnología de España. V. Caselles also acknowledges ‘ICREA Acadèmia’ prize by the Generalitat de Catalunya.

REFERENCES

Bradley, A. (1999). A wavelet visible difference predictor.

IEEE Trans. on Image Proc., 8:717–730.

Daubechies, I. (1992). Ten Lectures on Wavelets. SIAM.

Fausett, L. (2007). Applied Numerical Analysis Using MATLAB. Prentice Hall.

Hess, R., Bradley, A., and Piotrowski, L. (1983). Contrast-coding in amblyopia. I. Differences in the neural basis of human amblyopia. Proc. R. Soc. London Ser. B, 217:309–330.

Land, E. and McCann, J. (1971). Lightness and Retinex

theory. Journal of the Optical Society of America,

61(1):1–11.

Mallat, S. (2008). A Wavelet Tour of Signal Processing.

Academic Press, 3rd edition.

Palma-Amestoy, R., Provenzi, E., Bertalmío, M., and Caselles, V. (2009). A perceptually inspired variational framework for color enhancement. IEEE Transactions on Pattern Analysis and Machine Intelligence, 31(3):458–474.

Peli, E. (1990). Contrast in complex images. Journal of

Opt. Society of Am. A, 7(10):2032–2040.

Vandergheynst, P., Kutter, M., and Winkler, S. (2000).

Wavelet-based contrast computation and application

to digital image watermarking. In Storage and Re-

trieval for Image and Video Databases.

APPENDIX

The computation of the first variation of $D_{d^0_{j,k}}$ with respect to $\{d_{j,k}\}$ gives:

$$\frac{\partial D_{d^0_{j,k}}}{\partial \{d_{j,k}\}} = 1 - \frac{d^0_{j,k}}{d_{j,k}}. \tag{7}$$

The first variation of $C_{p_j,\{a_{j,k}\}}$ with respect to $\{d_{j,k}\}$ gives

$$\frac{\partial C_{p_j,\{a_{j,k}\}}}{\partial \{d_{j,k}\}} = -p_j\, \frac{a_{j,k}}{(d_{j,k})^2}. \tag{8}$$

Summing (7) and (8) and setting the result to zero we get

$$1 - \frac{d^0_{j,k}}{d_{j,k}} - p_j\, \frac{a_{j,k}}{(d_{j,k})^2} = 0; \tag{9}$$

multiplying by $d_{j,k}$ and simplifying the algebraic expression, we arrive at the result stated in Proposition 2.1.
