Blink and Wink Detection in a Real Working Environment

Dariusz Sawicki [a], Paweł Tarnowski [b], Andrzej Majkowski [c], Marcin Kołodziej [d] and Remigiusz Rak [e]

Warsaw University of Technology, Warsaw, Poland

Keywords: Multimedia, Eye Winking Recognition, Safety Glasses.

Abstract: A simple and effective method of recognizing eye blinking in industrial conditions is presented. The

developed method uses a camera built into safety glasses. The presented algorithm can be applied to recognize

whether glasses are correctly put on – to check if employees use personal protective equipment. Recognition

of open or closed eyes allows control by intentional winking. The algorithm uses only light sources present

in the workplace and does not require infrared radiation (IR). The solution was tested on a set of 1797 eye

photos recorded in a group of 10 participants. An analysis of the correctness of blink recognition and the

correctness of the algorithm's operation in various lighting conditions was carried out. Experiments showed

that the proposed algorithm met required project assumptions. The averaged results of blink recognition

obtained using the developed method are: accuracy 96.5%, precision 93.8%, specificity 98.9% and sensitivity

84.9%. Additionally, the algorithm is insensitive to changes in lighting and allows the use of one type of

glasses for different employees.

1 INTRODUCTION

1.1 Motivation

Ensuring work safety is one of the most important

tasks in industrial conditions. Eyes are particularly

vulnerable to injuries. In industrial conditions, our

eyes are exposed to many potentially dangerous

factors: mechanical, chemical, biological and optical.

The eyes can be protected by appropriate safety

glasses or protective goggles. In selected conditions,

full face protective gear can also be used. The use of

personal protective equipment is strictly required

(Bartkowiak, et al., 2012) in many workplaces.

However, employees do not always use personal protective equipment where it is required (Workers Fail to Wear, 2011). Unfortunately, this happens often, despite restrictions. It is the result of low awareness of threats (despite training), individual negligence and disregard for regulations.

A very important problem arises that should be solved: how to check whether an employee correctly uses his/her protective glasses. To check this, we can propose different sensors to measure the parameters (Kowalczyk and Sawicki, 2019). We can measure: distances between glasses and nearest surfaces; temperature in the environment of the glasses (looking for human body temperature); the color of the nearest surface (looking for skin color); and vibration (looking for heart rate). All these parameters can inform us as to whether glasses are correctly applied. However, this type of analysis is impractical – too many additional factors interfere with the correct assessment. As a result, it can only be performed in laboratory conditions, and not in industrial ones. It seems that an effective assessment could be made on the basis of eye image registration and blink recognition. For this, an effective algorithm for eye blink detection is required; an algorithm that can work in connection with safety glasses in industrial conditions.

[a] https://orcid.org/0000-0003-3990-0121
[b] https://orcid.org/0000-0003-0392-4084
[c] https://orcid.org/0000-0002-6557-836X
[d] https://orcid.org/0000-0003-2856-7298
[e] https://orcid.org/0000-0003-0674-453X

Sawicki, D., Tarnowski, P., Majkowski, A., Kołodziej, M. and Rak, R. Blink and Wink Detection in a Real Working Environment. DOI: 10.5220/0008479800780085. In Proceedings of the 3rd International Conference on Computer-Human Interaction Research and Applications (CHIRA 2019), pages 78-85. ISBN: 978-989-758-376-6. Copyright © 2019 by SCITEPRESS – Science and Technology Publications, Lda. All rights reserved.

On the other hand, work in industrial conditions is often supported by additional equipment. Computers, monitors or other displays can facilitate the work, can provide additional information, and give the

possibility of additional control. However, the

employee's hands are most often occupied with the

activities being performed. In this situation, the best

solution is a touchless interface using eye gestures,

head gestures or other body language (Evans et al.,

2000, Kim and Ryu, 2006). The use of eye gestures is

not a new idea. Eye gesture, eye tracking and gaze

tracking (oculography) can be used in many areas of

human activity, including human-computer

interaction (HCI) (Duchowski, 2007, Singh and

Singh, 2012). A survey of eye blinking applications

in HCI can be found in (Majaranta and Bulling, 2014,

Singh and Singh, 2018). There are also many

applications where wearable technology is proposed

for multimodal HCI. For example, a touchless

computer control method based on analysis of head

movement and eye gestures is presented in (Sawicki

and Kowalczyk, 2018). This solution has been

patented (Kowalczyk and Sawicki, 2017) and allows

replacement of the standard mouse in an effective way.

A good solution for industrial environments would be

to use eyeglasses to recognize eye gestures, because

it would not limit the employee's movements in the

workplace.

1.2 The Aim of the Article

A very important problem in an industrial environment

is to check whether an employee correctly uses

protective glasses. Analysis of eye image would give

the possibility to combine both functions considered

here: safety glasses with the ability to control with the

help of eye gestures and head movements. The key

problem in this case is the correct and effective

recognition of eye blinking for such an application.

The main aim of this paper is to develop a simple and

effective method that allows recognition of eye

blinking in industrial conditions using sensors built

into glasses.

2 BLINK DETECTION FOR EYE GESTURES: THE SHORT SURVEY OF SOLUTIONS

The most popular method for blinking detection is the

visual analysis of a facial image. It is also the oldest

method. When an image is captured using a camera,

we can step by step recognize individual elements. In

the first step, we isolate the face shape, in the second

the eye region and individual eye parts. In the third

step, we try to detect eye state: open or closed. In this

way, blinking / winking can be detected. In the last

step, various methods are applied. Most popular are

those based on pupil detection (Kim et al., 2011).

These methods include: statistical methods

(Bacivarov et al., 2008), methods based on image

comparison to templates (Grauman et al., 2001), use

of principal component analysis (PCA) (Le et al.,

2013), or use of median filter in shape analysis (Lee

et al., 2010). The main disadvantages of these

methods are their high sensitivity to changes in

lighting conditions or to changes in the position of the

camera relative to the eye pupil.

To solve the problem of the external lighting

conditions, an additional source of infrared radiation

(IR) is applied. In paper (Kapoor and Picard, 2001),

IR LEDs and a proper IR camera allow analysis of an

image independently of the lighting conditions. An

effective method based on IR for blinking and

winking detection is presented in (Kowalczyk and

Sawicki, 2019). This method also includes a ready

algorithm for replacing the mouse keys with eye

gestures. There are also many interesting commercial

solutions where eye blinking and/or gaze are

recognized. A system of eye blinking detection using

IR can be applied for communication by disabled

people (Blink-It, 2018). Driver fatigue can also be

recognized on the basis of blinking analysis (and IR

LEDs and cameras): see (Kojima et al., 2001), and (Driver

Monitoring Technology, 2018) for an idea and a

commercial solution, respectively.

3 INDUSTRIAL CONDITIONS AND THE MAIN ASSUMPTIONS OF THE PROJECT

The use of an additional IR source and camera allows

for practically error-free blink recognition

(Kowalczyk and Sawicki, 2019). It is documented

that infrared radiation with a wavelength greater than

1400 nm does not penetrate the retina of the eye

(Wolska, 2013). Additionally, the emissions from the

IR source should not exceed 100 W/m² at the retinal

level. It is assumed that IR radiation with such

parameters is safe for the human eye (Wolska, 2013).

However, even such low parameters are not accepted

during continuous operation in industrial conditions.

In industrial conditions, no IR source is accepted in

close proximity to the eye. Therefore, we were

looking for an algorithm that uses only light sources

present at the workplace.


A camera that recognizes blinking will be placed

close to the eye in the frame of the glasses. Thanks to

this, typical errors in the interpretation of facial

images are eliminated: the camera always registers the same view of the eye, whatever the position of the user's head and the direction of gaze. Partial covering of the user's head or face does not matter either, provided, of course, that the lighting is not completely obscured. The algorithm we are looking

for should meet the following conditions:

- The proposed algorithm should allow correct recognition of the eye state (closed or open); this means recognition of eye blinking as well as intentional winking.

- It should work correctly with any position of the pupil and gaze direction.

- It should work correctly at close distances between camera and eye. The camera should be placed in the frame of a pair of glasses and cannot obscure the wearer's field of vision. This means that a wide-angle lens will be used; it will be characterized by large distortions (perspective and non-linear). In this situation, we cannot expect, for example, that the pupil will have a round shape. Such an assumption is often adopted in the analysis of an eye image.

- The proposed algorithm should be insensitive to changes in lighting. In industrial conditions, good lighting of the workplace is required. However, there are different zones: lighter and darker (with a soft shadow). In addition, usually a lot of different light sources are mounted. This means that reflections (flashes) appear on the surface of the open eye.

- It should work correctly when using only light sources present at the workplace. Special sources of light (mounted LEDs), especially IR, will not be allowed.

- The proposed algorithm should be as simple as possible and should work fast when run on a simple microcontroller. The application should work effectively in real time.

The adopted assumptions regarding distortions of
the eye image are very important: they allow the use

of one type of glasses (with a specific camera setting)

with different employees. There will be no

requirement for an initial calibration for each

individual employee before starting work. On the

other hand, such an assumption means that solutions

similar to those used in eyeglasses with IR sources

cannot be considered. That is, the use of the analysis

of luminance levels at specific, precisely defined

points of the image (e.g. along a specific image

section) is not accepted.

4 ALGORITHM FOR EYE BLINK/WINK DETECTION

The proposed algorithm is as follows:

A1. Download the RGB image from the camera.

A2. Convert the RGB image into a monochrome image (Image_Mono) with a relatively small resolution (about 600 x 400).

A3. Apply Gaussian blur to Image_Mono and determine Image_Gauss.

A4. Determine the differential image: Image_Diff = 255 - Image_Mono + Image_Gauss.

A5. Apply thresholding and transform Image_Diff into binary form Image_Bin.

A6. Calculate the measure of detail MD. MD = the sum of all pixel values of Image_Bin.

A7. Test the closing / opening of the eye:
    If MD >= MofOE, then the eye is closed.
    If MD < MofOE, then the eye is open.

Where MofOE is a Measure of the Open Eye. The

value of this parameter was determined

experimentally based on a series of photos taken for

different people.
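Steps A3 to A7 can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: it uses pure Python lists so that it is self-contained (a real system would rely on an optimized image library), the helper names are hypothetical, the threshold 0.95 is interpreted as a fraction of the 8-bit full scale, Image_Diff is clipped to 0..255, pixels at or above the threshold are assumed to map to 1, and image borders are handled by clamping. Under these assumptions, flat regions stay close to 255 and pass the threshold, so MD grows as detail disappears, which matches the rule MD >= MofOE for a closed eye.

```python
import math


def gaussian_kernel(sigma):
    """Return a normalised 1-D Gaussian kernel truncated at about 3 sigma."""
    radius = max(1, int(3 * sigma))
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_line(values, kernel):
    """Blur one row or column; indices outside the line are clamped to its ends."""
    radius = len(kernel) // 2
    last = len(values) - 1
    return [
        sum(w * values[min(max(x + k - radius, 0), last)]
            for k, w in enumerate(kernel))
        for x in range(len(values))
    ]


def gaussian_blur(image, sigma):
    """Separable Gaussian blur of a 2-D list of gray levels (step A3)."""
    kernel = gaussian_kernel(sigma)
    rows = [blur_line(row, kernel) for row in image]
    cols = [blur_line(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def measure_of_detail(image_mono, sigma=20.0, threshold=0.95):
    """Steps A3-A6: return MD, the number of pixels that pass the threshold."""
    image_gauss = gaussian_blur(image_mono, sigma)
    limit = threshold * 255.0  # assumption: 0.95 is a fraction of full scale
    md = 0
    for mono_row, gauss_row in zip(image_mono, image_gauss):
        for mono, gauss in zip(mono_row, gauss_row):
            diff = min(255.0, max(0.0, 255.0 - mono + gauss))  # A4, clipped
            if diff >= limit:  # A5: this pixel becomes 1 in Image_Bin
                md += 1        # A6: running sum of Image_Bin
    return md


def eye_is_closed(image_mono, mof_oe, sigma=20.0, threshold=0.95):
    """Step A7: the eye counts as closed when MD >= MofOE."""
    return measure_of_detail(image_mono, sigma, threshold) >= mof_oe


# Synthetic 20 x 20 images for illustration only (not data from the paper).
flat = [[128] * 20 for _ in range(20)]  # uniform area, like an eyelid
busy = [[255 * ((x + y) % 2) for x in range(20)] for y in range(20)]  # many edges

print(measure_of_detail(flat, sigma=2.0))
print(measure_of_detail(busy, sigma=2.0))
```

The uniform image yields a larger MD than the checkerboard, so with a suitable MofOE the first is classified as closed and the second as open.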

The algorithm uses the observation that the image

of the closed eye in fact contains much less detail than

the image of the open eye. In the image of the open

eye, we can see many different elements: pupil, iris,

whites, eyelids (independently lower and upper), and

eyelashes. These elements and the boundaries of

areas related to these elements (and differences in

contrast between them) create a rich set of details.

They are emphasized after subtracting the blurred

image (Image_Gauss). On the other hand, the image

of the closed eye is primarily a large area of the

eyelids – the area in which the image of skin with a

very similar color (gray level) dominates. This is an

area without differences, borders, contrasts and

details. In the image of the closed eye, we cannot see

the elements of the eye; the only elements apart from

the eyelids might be eyelashes, and possibly skin

wrinkles at the corners of the eyes. Of course, in

practice, the camera will not always capture the image

of a perfectly closed eye. However, the state of the eye


during blinking (not completely closed) also differs

significantly in terms of detail from the image of the

open eye. The more closed the eye, the smaller the

area of the elements of the open eye becomes – and

the more the area of the eyelids dominates.

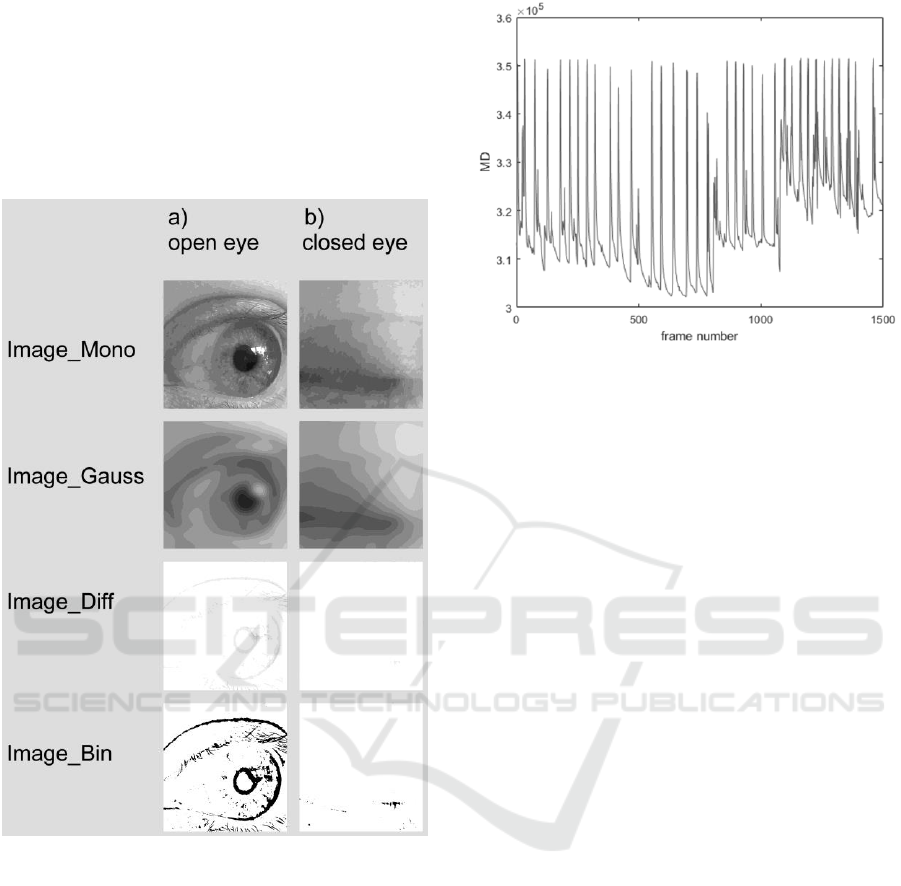

Consecutive images corresponding to individual

stages of the algorithm's implementation are

presented in Figure 1.

Figure 1: Images of eye in consecutive stages of proposed

algorithm: a) open eye b) closed eye.

The Gaussian blur parameter (stDev = 20), the

binarization threshold (0.95) and the value of MofOE were determined experimentally based on a series of 15,000 photos taken of 10

different people. An example graph of the sum of

pixel values (MD) of Image_Bin for one person is

shown in Figure 2.

Figure 2: An MD chart for one person for subsequent

registered images.
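The paper states that MofOE was determined experimentally but does not describe the procedure. One plausible way to do this, shown here purely as an assumption, is to collect MD values for images labelled open and closed and pick the threshold that classifies most of them correctly. The function name `choose_mof_oe` and the sample numbers are hypothetical.

```python
def choose_mof_oe(md_open, md_closed):
    """Return (threshold, accuracy) maximising accuracy on labelled MD values.

    An image is classified as closed when MD >= threshold, as in step A7.
    """
    if not md_open or not md_closed:
        raise ValueError("both classes need at least one sample")
    candidates = sorted(set(md_open) | set(md_closed))
    best_threshold, best_correct = None, -1
    for t in candidates:
        correct = (sum(1 for md in md_closed if md >= t)
                   + sum(1 for md in md_open if md < t))
        if correct > best_correct:  # keep the lowest threshold among ties
            best_threshold, best_correct = t, correct
    accuracy = best_correct / (len(md_open) + len(md_closed))
    return best_threshold, accuracy


# Hypothetical MD samples, for illustration only.
print(choose_mof_oe([100, 110, 120], [300, 310, 320]))
```

A per-person version of the same search would be one way to obtain an individualized threshold.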

5 MODEL OF GLASSES AND PERFORMED TESTS

5.1 The Model of Glasses

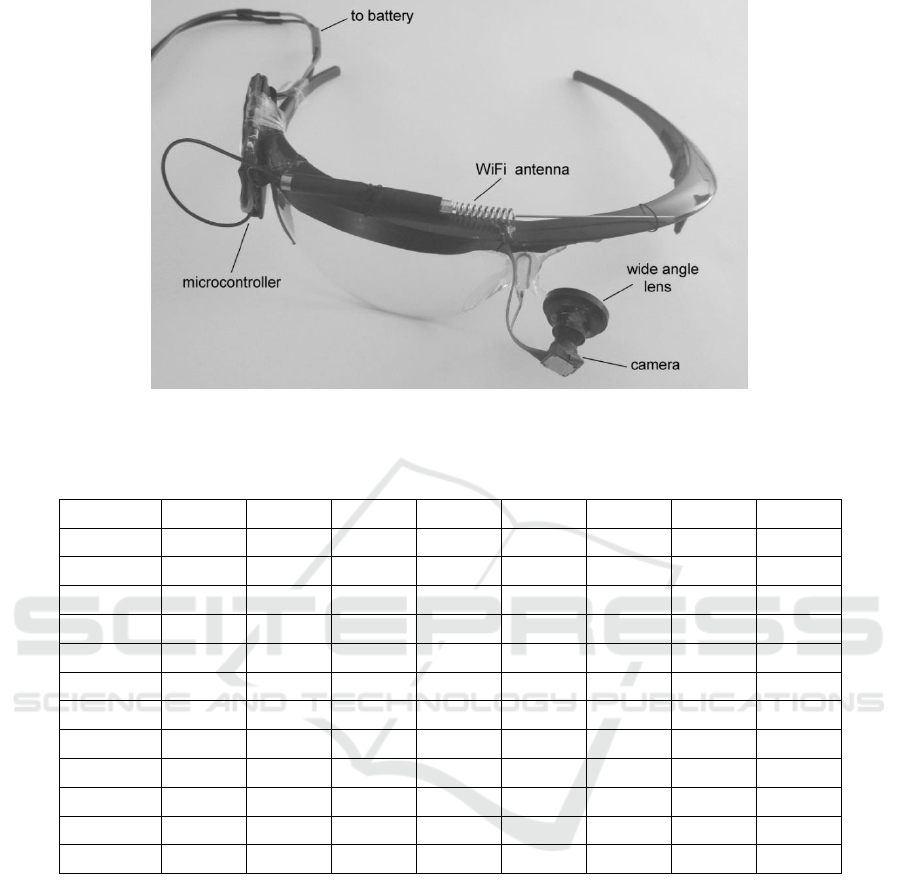

We have developed and manufactured a model of the glasses, based on original industrial safety glasses. We did not have a sufficiently small camera with a wide-angle lens. Therefore, we cut out the surface of one lens of the glasses, mounted the camera there

and equipped it with an additional wide-angle lens

(Figure 3). In this way, the camera is positioned close

to the nose and covers the image of the entire eye

from a very close distance. This corresponds to the

situation of placing the microcamera in the frame of

glasses in the target solution. The specific setting (at

the corner of the eye), the small distance and the wide

angle of the lens mean that the image of the eye can

differ significantly from the typical image of the eye

which we get by looking at the face from a sufficient

distance (“full face” view).

5.2 Conducted Tests

We conducted tests using a large set of photos. We recorded 1797 images of eyes (closed and open) in an experiment in which 10 participants took part: 3

women aged 40 to 50 (average age 45) and 7 men

aged 29 to 55 (average age 40). Each participant

blinked spontaneously (in a natural way) for 1 minute.

The images were recorded by a camera attached to the

frame of the glasses. The participants could move their head. In this case, the camera changed its position according to the movements of the head and the image was registered correctly. On the other hand, movements of the head caused changes in the face lighting, resulting in images of the eye with slightly different histograms. However, the use of the proposed algorithm gave very similar final images (Image_Bin).

Figure 3: Prepared model of glasses used in our experiments.

Table 1: Results of tests True Positive (TP), False Negative (FN), True Negative (TN), False Positive (FP), Accuracy (ACC), Precision (PREC), Specificity (SPEC), Sensitivity (SENS).

| Participant | TP | FP | TN | FN | ACC | PREC | SPEC | SENS |
|---|---|---|---|---|---|---|---|---|
| 1 | 15 | 1 | 739 | 6 | 0.991 | 0.938 | 0.999 | 0.714 |
| 2 | 40 | 3 | 729 | 0 | 0.996 | 0.930 | 0.996 | 1.000 |
| 3 | 37 | 0 | 730 | 3 | 0.996 | 1.000 | 1.000 | 0.925 |
| 4 | 19 | 0 | 733 | 15 | 0.980 | 1.000 | 1.000 | 0.559 |
| 5 | 19 | 1 | 736 | 8 | 0.988 | 0.950 | 0.999 | 0.704 |
| 6 | 19 | 1 | 739 | 3 | 0.995 | 0.950 | 0.999 | 0.864 |
| 7 | 16 | 2 | 736 | 10 | 0.984 | 0.889 | 0.997 | 0.615 |
| 8 | 35 | 0 | 733 | 0 | 1.000 | 1.000 | 1.000 | 1.000 |
| 9 | 40 | 1 | 729 | 1 | 0.997 | 0.976 | 0.999 | 0.976 |
| 10 | 18 | 8 | 737 | 0 | 0.990 | 0.692 | 0.989 | 1.000 |
| All | 258 | 17 | 1476 | 46 | 0.965 | 0.938 | 0.989 | 0.849 |
| Mean | 25.8 | 1.7 | 733.95 | 4.6 | 0.992 | 0.932 | 0.998 | 0.836 |

The images for analysis were pre-selected. As the

blink detection algorithm was being tested, the test

data should be unambiguous. Therefore, all images

where the state of the eye was ambiguous were deleted.

A set of images was prepared for the analysis, on

which the eye was correctly closed or correctly open.

5.3 Analysis of the Performed Tests

We carried out an analysis of the performed

experiments. The results in the form of calculated

parameters are presented in Table 1. True Positive

(TP) means that the algorithm correctly recognized

blinking – eye as closed. True Negative (TN) means

that the algorithm correctly recognized the eye as

open. False Positive (FP) means that the algorithm

incorrectly recognized blinking (open eye recognized

as closed). False Negative (FN) means that the

algorithm incorrectly recognized an open eye (closed

eye recognized as open).
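The four reported measures follow from these counts by the standard confusion-matrix formulas. The short sketch below, with a hypothetical function name, recomputes them from the counts in the "All" row of Table 1; it assumes that none of the denominators is zero.

```python
def blink_metrics(tp, fp, tn, fn):
    """Return accuracy, precision, specificity and sensitivity from raw counts."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp),
        "specificity": tn / (tn + fp),
        "sensitivity": tp / (tp + fn),
    }


# Counts taken from the "All" row of Table 1.
overall = blink_metrics(tp=258, fp=17, tn=1476, fn=46)
print({name: round(value, 3) for name, value in overall.items()})
```

Rounded to three decimals, this gives 0.965, 0.938, 0.989 and 0.849, in agreement with the values reported in that row.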

Analyzing the results, it can be concluded that the

algorithm recognized the state of the eye very well.


There were very few mistakes, as evidenced by the

high values of the determined parameters in Table 1.

One entry in Table 1 stands out: Participant 4, with FN = 15. This participant was a woman wearing heavy make-up. The shiny eyelid reflected lights and bright objects. These reflections could be counted as a large number of details, so the algorithm could recognize the closed eye as open. Apart from hoping that employees do not wear heavy make-up at the workplace, an individualized threshold setting could help in such cases.

It is worth emphasizing those properties of the proposed algorithm that are consistent with the assumptions and relevant to the future application.

Experiments have shown that the algorithm is

insensitive to camera settings. It is only important that

the camera covers the image of the eye (smaller or

larger, placed in the image in any position). The

rotation of the camera is also irrelevant. The

algorithm is not sensitive to the perspective projection

method. This is very important, because the location

of the camera in the corner of the glasses, very close

to the eye, can cause large distortions.

The proposed algorithm is not sensitive to flashes

appearing due to the reflection of light (or very bright

objects) at the surface of the eye. What is more, the

reaction to flashes becomes an advantage of this

algorithm. In practice, flashes can arise only on the

surface of an open eye – adding in this case further

details. And the more details there are, the easier it is

to recognize an open eye in the proposed algorithm.

Similar reflections will not be created at the surface

of the eyelid, which has light-scattering properties.

The algorithm is practically insensitive to the

level of illumination. The Gaussian blur defines the

average brightness level of the image. The details that

remain after subtracting the blur have a level of

brightness not much different from the average and at

the same time are also dependent on the average level

of brightness. We conducted an analysis of the

experiment cases when the participant's face was

illuminated in different ways. In addition, we

conducted a series of experiments deliberately setting

different levels and positions of lighting. The results

of blink recognition were consistent with the results

for the same participant in experiments carried out

under typical/average (and also correct) lighting

conditions. In 95% of cases the algorithm worked

correctly. In other cases (5%) it was necessary to

manually correct the threshold level. Experiments

have shown that, for different levels of eye lighting,

the brightness levels of Image_Diff images are very

similar. This is consistent with the results described

in article (Lee et al., 2010), where thresholding was

also used, but a median filter was applied.
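A short worked equation may make the brightness argument concrete. As a simplifying assumption that is not stated in the paper, suppose that a change in lighting adds a uniform offset $c$ to every pixel and that no clipping occurs. Writing $G *$ for the Gaussian blur, which is a normalized weighted average and therefore leaves a constant unchanged:

```latex
\begin{aligned}
\mathrm{Image\_Mono}'  &= \mathrm{Image\_Mono} + c, \\
\mathrm{Image\_Gauss}' &= G * (\mathrm{Image\_Mono} + c) = \mathrm{Image\_Gauss} + c, \\
\mathrm{Image\_Diff}'  &= 255 - \mathrm{Image\_Mono}' + \mathrm{Image\_Gauss}' \\
                       &= 255 - \mathrm{Image\_Mono} + \mathrm{Image\_Gauss} = \mathrm{Image\_Diff}.
\end{aligned}
```

Under this model the offset cancels, so Image_Diff and the resulting MD do not change. A multiplicative change by a factor $k$ would instead give $\mathrm{Image\_Diff}' = 255 - k\,(\mathrm{Image\_Mono} - \mathrm{Image\_Gauss})$, which scales the detail term; this may be one reason why a fixed threshold occasionally needs adjusting.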

6 THE NEW SOLUTION: APPLICATION APPROACH

Many systems already exist in vehicles that analyze eye blink frequency to assess driver fatigue. They are used effectively in vehicles, where the position of the driver's head is fixed. At industrial workplaces, however, this is not the case. The

proposed solution (camera built into the frame of

glasses) solves the problem in any position of head

and allows for effective use in industrial

environments as well.

Industrial conditions place specific requirements

on the operation of the discussed algorithm. In addition,

the algorithm is designed to analyze images from a

camera built into the frame of a pair of glasses. This

means additional conditions that result from the

specific projection that occurs while recording

images with a camera. A preliminary analysis of

known solutions showed that it is very difficult to use

pre-existing solutions. It is also difficult to match a

known solution to the set requirements.

The proposed simple solution allows blinking to be recognized effectively. As a result, the eye state

(closed or open) can be correctly analyzed. It is

sufficient for effective diagnosis of fatigue (Caffier et

al., 2003, Galley et al., 2004). Good results can be

achieved by using the PERCLOS parameter (Sommer

and Golz, 2010). PERCLOS is defined as the

percentage of time when the pupil is obscured by the

eyelid to a degree greater than 80% (Wierwille et al.,

1994).
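As an illustration of how the binary eye state could feed such a measure, the sketch below approximates PERCLOS by the share of frames classified as closed. This is an assumption: the algorithm outputs only closed or open, not the degree of pupil occlusion, so a "closed" frame stands in for the 80% criterion, and frames are assumed to be sampled at a constant rate. The function names are hypothetical.

```python
def perclos(closed_flags):
    """Percentage of frames in which the eye was classified as closed."""
    if not closed_flags:
        raise ValueError("at least one frame is required")
    return 100.0 * sum(1 for closed in closed_flags if closed) / len(closed_flags)


def sliding_perclos(closed_flags, window):
    """PERCLOS over every run of `window` consecutive frames."""
    return [perclos(closed_flags[i:i + window])
            for i in range(len(closed_flags) - window + 1)]


# Hypothetical per-frame results of step A7 (True = closed).
frames = [False, False, True, True]
print(perclos(frames))
print(sliding_perclos(frames, window=2))
```

In practice the window would span many seconds of video, so that single spontaneous blinks contribute only a small share.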

It is worth noting that no IR source is required in

close proximity to the eye. This is very important in

real, industrial conditions. The lack of IR source also

distinguishes the proposed solution from many well-known methods, including the patented one (Kowalczyk

and Sawicki, 2017).

On the other hand, it seems that to check whether

workers wear safety glasses, very simple methods can

be used, for example tactile sensors that measure the distance between the frame of the glasses and the body.

Unfortunately, these methods work properly only in

ideal laboratory conditions and cannot be used in real

industrial conditions (Kowalczyk and Sawicki,

2019). In addition, the analysis of eye blinking does not leave room for fraud attempts in practical situations.


7 CONCLUSIONS

The aim of the research was to develop a simple

algorithm for eye state recognition working in

industrial applications. A solution has been proposed

based on the fact that many more details are shown in

the image of an open eye than in an image of a closed

eye. An algorithm was introduced in which Gaussian

blur is applied. Then, using a differential comparison,

an image is prepared in which the pixel values

determine the measure of details for the image of the

eye.

We have also built the model of the glasses in

which the proposed algorithm was tested. The

solution was tested on a large set of eye photos. The

pictures were recorded in a group of 10 participants.

The accuracy of eye state recognition was 96.5%.

This was a very good result that allows for application

in the assumed conditions. Experiments have shown

that the proposed algorithm works correctly in

conditions of changeable lighting. The algorithm also

works correctly for the specific working conditions of

the camera – position very close to the eye and

application of a wide-angle lens. In this way, the

required project assumptions have been met.

The algorithm allows correct recognition of the

eye state (closed or open). This recognition is not

affected by the opening time and closing time.

Therefore, the algorithm allows the identification of

spontaneous blinking as well as intentional winking.

In this way, it can be applied to the applications that

were considered: for recognition of whether glasses

are correctly put on and for control by eye blinking.
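Because the eye state is available for every frame, spontaneous blinks and intentional winks could be separated afterwards by the duration of each closure. The sketch below shows one way to do this; the 0.5 s limit, the frame rate and the function names are hypothetical and are not taken from the paper.

```python
def closure_events(closed_flags):
    """Return (start_index, length) for each run of consecutive closed frames."""
    events = []
    start = None
    for i, closed in enumerate(closed_flags):
        if closed and start is None:
            start = i
        elif not closed and start is not None:
            events.append((start, i - start))
            start = None
    if start is not None:  # sequence ended while the eye was still closed
        events.append((start, len(closed_flags) - start))
    return events


def classify_events(closed_flags, fps, wink_min_seconds=0.5):
    """Label each closure as 'blink' or 'wink' by its duration in seconds."""
    labels = []
    for start, length in closure_events(closed_flags):
        duration = length / fps
        kind = "wink" if duration >= wink_min_seconds else "blink"
        labels.append((start, duration, kind))
    return labels


# Hypothetical sequence at 10 frames per second (1 = closed).
flags = [0, 1, 1, 0, 0, 1, 1, 1, 1, 1, 1, 0]
print(classify_events(flags, fps=10))
```

Here the two-frame closure is labelled a blink and the six-frame closure a wink; a real interface would tune the limit to the users and the camera frame rate.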

In the future, we plan to try to extend the

algorithm with the possibility of automatically

adjusting the threshold (parameter MofOE –

Measure of the Open Eye) – without experimental

analysis on a large set of photos. We are also planning

to use a special microcamera that will allow it to be

built into the frame of the glasses.

ACKNOWLEDGEMENTS

This paper has been based on the results of a research

project carried out within the framework of the fourth

stage of the National Programme "Improvement of

Safety and Working Conditions" partly supported in

2017–2019 within the framework of research and

development by the Ministry of Labour and Social

Policy. The Central Institute for Labour Protection –

National Research Institute is the Programme's main

coordinator.

REFERENCES

Bacivarov, I., Ionita, M., Corcoran, P., 2008. Statistical

models of appearance for eye tracking and eye-blink

detection and measurement. IEEE Transactions on

Consumer Electronics. 54(3). 1312–1320. DOI:

http://dx.doi.org/10.1109/TCE.2008.4637622.

Bartkowiak, G. et al., 2012. Use of Personal Protective

Equipment in the Workplace. In: Handbook of Human

Factors and Ergonomics, fourth ed. John Wiley &

Sons, Inc. DOI: http://dx.doi.org/10.1002/9781118131350.ch30

Blink-It – system for environment control and

communication for entirly disabled people.

http://www.ober-consulting.com/13/lang/1/ last

accessed 20 December 2018.

Caffier, P., Erdmann, U., Ullsperger, P., 2003.

Experimental evaluation of eyeblink parameters as a

drowsiness measure. European Journal of Applied

Physiology, 89(3/4), May 2003, 319-325. DOI:

http://dx.doi.org/10.1007/s00421-003-0807-5

Driver Monitoring Technology. In: Automotive, World's

best driver monitoring technology that enhances safety

in real time. https://www.seeingmachines.com/industry-applications/automotive/ last accessed 20

December 2018.

Duchowski, A., 2007. Eye tracking methodology. Theory

and practice. 2nd ed. London: Springer.

Evans, D.G., Drew, R., Blenkhorn, P., 2000. Controlling

Mouse Pointer Position Using an Infrared Head-Operated Joystick. IEEE Transactions on

Rehabilitation Engineering, 8(1), 107–117.

Galley, N., Schleicher, R., Galley, L., 2004. Blink

parameter as indicators of driver’s sleepiness –

Possibilities and limitations. Vision in Vehicles, 10,

189-196.

Grauman, K., Betke, M., Gips, J., Bradski, G., 2001.

Communication via eye blinks - detection and duration

analysis in real time. In: Proc. of IEEE CVPR, Kauai,

HI, USA. 2001, pp. 1010–1017, DOI:

http://dx.doi.org/10.1109/CVPR.2001.990641.

Kapoor, A., Picard, R.W., 2001. A real-time head nod and

shake detector. In: Proc. 2001 Workshop on perceptive

user interfaces. November 2001. pp. 1-5. DOI:

http://dx.doi.org/10.1145/971478.971509.

Kim H., Ryu D., 2006. Computer control by tracking head

movements for the disabled. In: Proc. of the ICCHP

’06. In: Lecture Notes in Computer Science, 4061,

pp.709–715, Springer.

Kim, D., Choi, S., Choi, J., Shin, H., Sohn, K. 2011. Visual

fatigue monitoring system based on eye-movement and

eye-blink detection. In: Proc. SPIE 7863, Stereoscopic

Displays and Applications XXII, 786303. DOI:

http://dx.doi.org/10.1117/12.873354.

Kojima, N., Kozuka, K., Nakano, T., Yamamoto, S. 2001.

Detection of consciousness degradation and

concentration of a driver for friendly information

service. In: Proc. of the IEEE International Vehicle

Electronics Conference, Tottori, Japan. pp. 31-36. DOI:

http://dx.doi.org/10.1109/IVEC.2001.961722.


Kowalczyk, P., Sawicki, D., 2017. Computer controlling

device, (in Polish: Urządzenie do sterowania

komputera), Patent Number PL402798, granted on 7

June 2017, by Polish Patent Office.

Kowalczyk, P., Sawicki, D., 2019. Blink and wink

detection as a control tool in multimodal interaction.

Multimedia Tools and Applications. 78(10), 13749–13765. DOI: http://dx.doi.org/10.1007/s11042-018-6554-8.

Lee, W.O., Lee, E.C., Park, K.R., 2010. Blink detection

robust to various facial poses. Journal of Neuroscience

Methods. 193(2), 356–372.

Le, H., Dang, T., Liu, F., 2013. Eye blink detection for

smart glasses. In: Proc. of 2013 IEEE International

Symposium on Multimedia. pp.305-308. DOI:

http://dx.doi.org/10.1109/ISM.2013.59

Majaranta, P., Bulling, A., 2014. Eye Tracking and Eye-Based Human–Computer Interaction. In: Fairclough,

S., Gilleade, K. (eds) Advances in Physiological

Computing. Human–Computer Interaction Series. pp.

39-65. Springer. DOI: http://dx.doi.org/10.1007/978-1-4471-6392-3_3

Sawicki, D., Kowalczyk, P., 2018. Head Movement Based

Interaction in Mobility. International Journal of

Human–Computer Interaction. 34(7), 653-665. DOI:

http://dx.doi.org/10.1080/10447318.2017.1392078.

Singh, H., Singh, J. 2012. Human eye tracking and related

issues: a review. International Journal of Scientific and

Research Publications. 2(9), 1-9.

Singh, H., Singh, J., 2018. Real-time eye blink and wink

detection for object selection in HCI systems. Journal

on Multimodal User Interfaces, 12(1), 55-65. DOI:

http://dx.doi.org/10.1007/s12193-018-0261-7

Sommer, D., Golz, M., 2010. Evaluation of PERCLOS

based Current Fatigue Monitoring Technologies. In:

Proc. of the 32nd Annual International Conference of

the IEEE EMBS, Buenos Aires, Argentina, August 31 -

September 4, 2010, DOI: http://dx.doi.org/10.1109/IEMBS.2010.5625960

Wierwille W, Wreggit, S.S., Kirn, C.L., Ellsworth, L.A.,

Fairbanks, R.J., 1994. Research on vehicle-based driver

status / performance monitoring: development,

validation, and refinement of algorithms for detection

of driver drowsiness. Report No DOT HS 808 247.

NHTSA, Washington D.C. https://catalog.hathitrust.org/Record/005519249 last accessed 01 August 2019.

Wolska, A., 2013. Artificial optical radiation – principles of

occupational risk assessment (in Polish). Central

Institute for Labour Protection - National Research

Institute, Warsaw Poland.

Workers Fail to Wear Required Personal Protection

Equipment. In: Sustainable Plant, By Objective.

http://www.sustainableplant.com/2011/07/workers-fail-to-wear-required-personal-protection-equipment/

last accessed 20 December 2017.
